Like many tech enthusiasts, I was impressed by the announcements OpenAI made this week, and I found myself constantly refreshing https://platform.openai.com/playground in anticipation. Finally, the moment arrived!

One of the biggest needs in generative AI is the ability to inject your own content, or “embed” it, so that the answers become more relevant. This was already possible using the OpenAI APIs, a vector database, a bit of LangChain, and some coding. In fact, many sites were already offering this kind of feature. People are saying OpenAI just killed all the “wrapper” startups built around it, because it is now possible to do just that directly on the OpenAI platform.

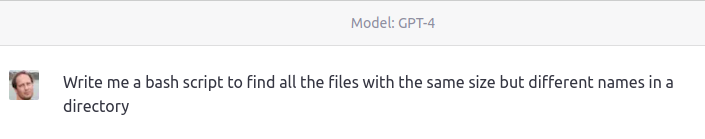
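To make the do-it-yourself approach mentioned above more concrete, here is a minimal sketch of the idea in plain Python: store your notes as vectors, find the notes closest to a question, and paste them into the prompt. Everything in it is an assumption made for illustration: `embed` is a toy bag-of-words stand-in for a real embedding model, `TinyVectorStore` is a hypothetical in-memory replacement for a vector database, and the sample notes are made up. It does not call the OpenAI API or LangChain.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class TinyVectorStore:
    """Hypothetical in-memory replacement for a vector database."""

    def __init__(self):
        self.items = []  # list of (text, vector) pairs

    def add(self, text):
        self.items.append((text, embed(text)))

    def search(self, query, k=2):
        """Return the k stored texts most similar to the query."""
        q = embed(query)
        ranked = sorted(self.items, key=lambda it: cosine(q, it[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


def build_prompt(question, store, k=2):
    """Inject the most relevant notes into the prompt sent to the model."""
    context = "\n".join(f"- {note}" for note in store.search(question, k))
    return f"Answer using only these notes:\n{context}\n\nQuestion: {question}"


# Made-up sample notes, loosely inspired by the logbook topics.
store = TinyVectorStore()
store.add("Git: push --follow-tags sends annotated tags along with the branch.")
store.add("Wardley Mapping helps with strategic planning and situational awareness.")
store.add("OKRs should be ambitious, measurable, and aligned.")

print(build_prompt("What did I learn about Git tags?", store, k=1))
```

In a real setup, the resulting prompt would be sent to a language model, and `embed` would be replaced by a proper embedding model so that similar meanings match, not just identical words.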

It took me about 5 minutes to conduct this experiment:

For the last 3 years, I have been keeping a logbook of everything I found interesting and learned about. I uploaded that content to ChatGPT and then asked it to tell me what I have learned in the past 3 years.

If you have a ChatGPT Plus account, you can play with it here:

https://chat.openai.com/g/g-PAKk8wkX0-aurelien-s-digital-twin

Here is the result of my conversation with my digital twin:

What have you learned in 2021?

In 2021, my knowledge base was significantly expanded with insights primarily focused on product management, leadership, and technical strategies. Here are some key learnings from that year:

- Agile Product Ownership: Emphasizing the role of a Product Owner in prioritizing tasks and the importance of saying ‘no’ to maintain focus on the most critical items.

- Key Product KPIs: Understanding the top product key performance indicators, such as Product Stickiness, Usage, Feature Adoption and Retention, Net Promoter Score (NPS), and others. These KPIs are essential for evaluating the success and impact of a product.

- The Boring Architecture for Data Science: Highlighting the necessity of having developers in data science projects to ensure that the work doesn’t just stay in theory but is actually implemented in production.

- Git and GitHub Best Practices: Insights into new functionalities in Git, like the `--follow-tags` option for pushing relevant tags with branches, and the use of Git aliases for efficiency. Also, the introduction of GitHub Actions for automating workflows.

- Node.js Development: Techniques for making HTTP calls in Node.js, using Axios, and handling maintenance modes in Express applications.

- Command Line Efficiency: Improving command-line usage with history search capabilities, bashrc configurations, and .inputrc settings for a more efficient and productive terminal experience.

- Cloud and CI/CD Technologies: Learning about Google Cloud Run and its functionalities, as well as continuous integration and deployment strategies.

- Legal and Licensing: Gaining knowledge about the ISC License, its implications, and its application in software development.

- Wardley Mapping: Understanding the basics of Wardley Mapping, a technique for strategic planning and situational awareness in business.

- E-commerce and SMB Market Automation: Exploring strategies and technologies for automating small and medium-sized businesses in the e-commerce sector.

These learnings reflect a diverse range of topics, covering both technical and managerial aspects, crucial for a well-rounded understanding of the IT and software development landscape.

And in 2022?

In 2022, my knowledge further expanded, covering diverse topics in product management, engineering leadership, and strategic IT practices. Here’s a summary of what I learned:

- Outcome-Focused Approach in Organizations: Strategies for persuading companies to adopt an outcome-focused approach instead of a feature-factory mindset. This involves being consistent in communicating goals and employing timeboxes as a healthy forcing function.

- Product Prioritization Techniques: Insights from various sources about effective product prioritization, including the importance of identifying customer value and optimizing workflows.

- Project Management and Agile Practices: Emphasis on products over projects, the significance of team collaboration, and the prioritization of value over effort, aligning with the principles of the FlowCon Manifesto and other agile frameworks.

- KPIs for Customer Service: Understanding key performance indicators for customer service, such as resolution rates, customer satisfaction scores, and Net Promoter Scores.

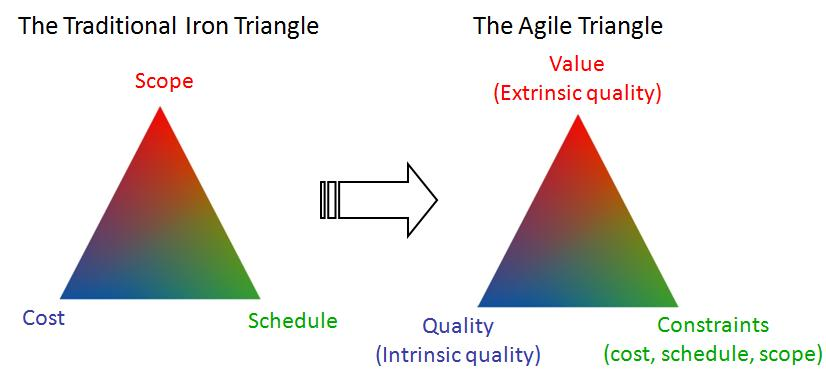

- DSDM Agile Project Framework: A deep dive into the DSDM methodology, focusing on its eight fundamental principles, the MoSCoW prioritization technique, and benefits like user ownership and meeting true business requirements.

- Strategic Thinking and Decision Making: Exploring strategies blending practical experiences with academic theories. This includes understanding strategies of terrain versus strategies of trajectory and the concept that a viable strategy’s opposite must also be viable.

- Outcome-Based Roadmaps: Learning about the advantages of outcome-based roadmaps in product development, including improved visibility, team autonomy, and the incorporation of user feedback.

- Organizational Transformation: The emotional aspects of organizational transformation, including addressing the unsustainable status quo, developing a purposeful vision, and aligning KPIs with resources and people.

- OKR Methodology: Detailed insights into Objectives and Key Results (OKRs), focusing on creating ambitious, measurable, and impactful goals.

- Tech Audit and Due Diligence: Understanding the comprehensive process of technical auditing and due diligence, including aspects like technical architecture, team quality, cybersecurity practices, and data practices.

- Leadership in Technology and Product Development: Emphasizing the importance of understanding the customer’s perspective, creating a continuous workflow, and fostering a culture of continuous improvement.

These learnings from 2022 represent a rich tapestry of knowledge, blending technical acumen with strategic insight and leadership skills, essential for navigating the complex and evolving landscape of IT and software development.

And now 2023?

In 2023, my learning journey continued, focusing on advanced aspects of IT platforms, cloud computing, data platforms, and generative AI, among others. Here are the key insights:

- CTO Role and Responsibilities: Emphasis on building a culture of trust and safety in the technical organization, making strategic decisions collaboratively, and ensuring the development of high-quality products.

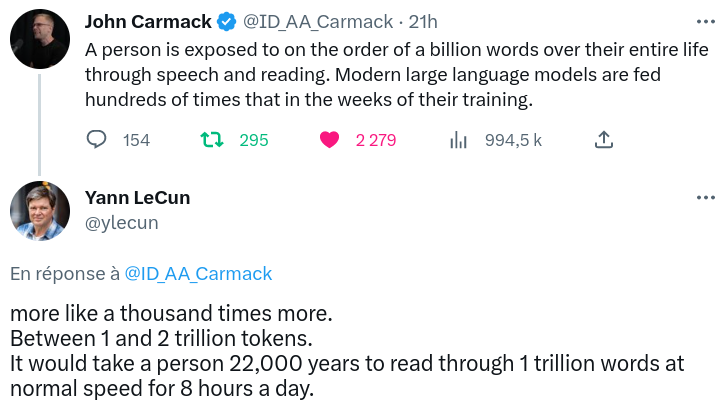

- Generative AI and Programming: Insight into the potential impact of AI tools like GPT-3 on programming, highlighting the necessity for clients to clearly articulate their requirements for successful implementation.

- Component-Based Development: Understanding that a significant portion of required components in software development may already exist elsewhere, emphasizing the reusability of code.

- Product vs. Engineering Dynamics: Delving into the interplay between product management and engineering, and how these disciplines interact and influence each other.

- Organizational Investment and Product Thinking: Exploring the concept of funding in organizations, particularly how investment in people and product thinking plays a crucial role.

- Theory X and Theory Y in Management: The contrast between Theory X (an authoritative management style with a pessimistic view of employee motivation) and Theory Y (a more empowering, trust-based approach).

- People, Process, Technology Framework: The significance of balancing these three elements for successful organizational transformation.

- ChatGPT in IT Platforms: Exploring the application of ChatGPT in various aspects of IT platforms and its implications.

- Agile and Lean Principles in Tech: Insights into the application of agile and lean methodologies in the tech sector, particularly in software development and project management.

- OKR Guide: Understanding the structure and implementation of Objectives and Key Results (OKRs), focusing on creating aligned, ambitious, and measurable goals.

- Engineering Onboarding and Middle Management: Strategies for effective onboarding of new engineering directors and the importance of focusing on high-impact areas rather than getting stuck in ‘the middle’ of organizational hierarchies.

- Digital Product Design and Iteration: Emphasizing the speed of iteration in digital product design and the importance of launching imperfect products to quickly learn and adapt.

These insights from 2023 reflect a deepening of my understanding in IT leadership, strategic planning, and the practical application of AI and agile methodologies in modern technology environments.

It is quite a good summary of my main interests over the past 3 years.

There is one thing an LLM can’t do: read our minds. Therefore, knowledge management (capturing thoughts, ideas, problems, solutions, etc.) is going to be key in a world transformed by LLMs.

The other thing it cannot do is predict the future. I asked it 😉

And in 2024?

I do not have any information or documents related to learnings from the year 2024 in my current knowledge base. My knowledge is current up to January 2023, and I haven’t received updates or documents pertaining to 2024. If you have specific questions or need insights on trends and predictions up to early 2023, I’d be happy to assist with that information.